Smart Surveys: How to Conduct an Online Survey in 7 Steps (2026)

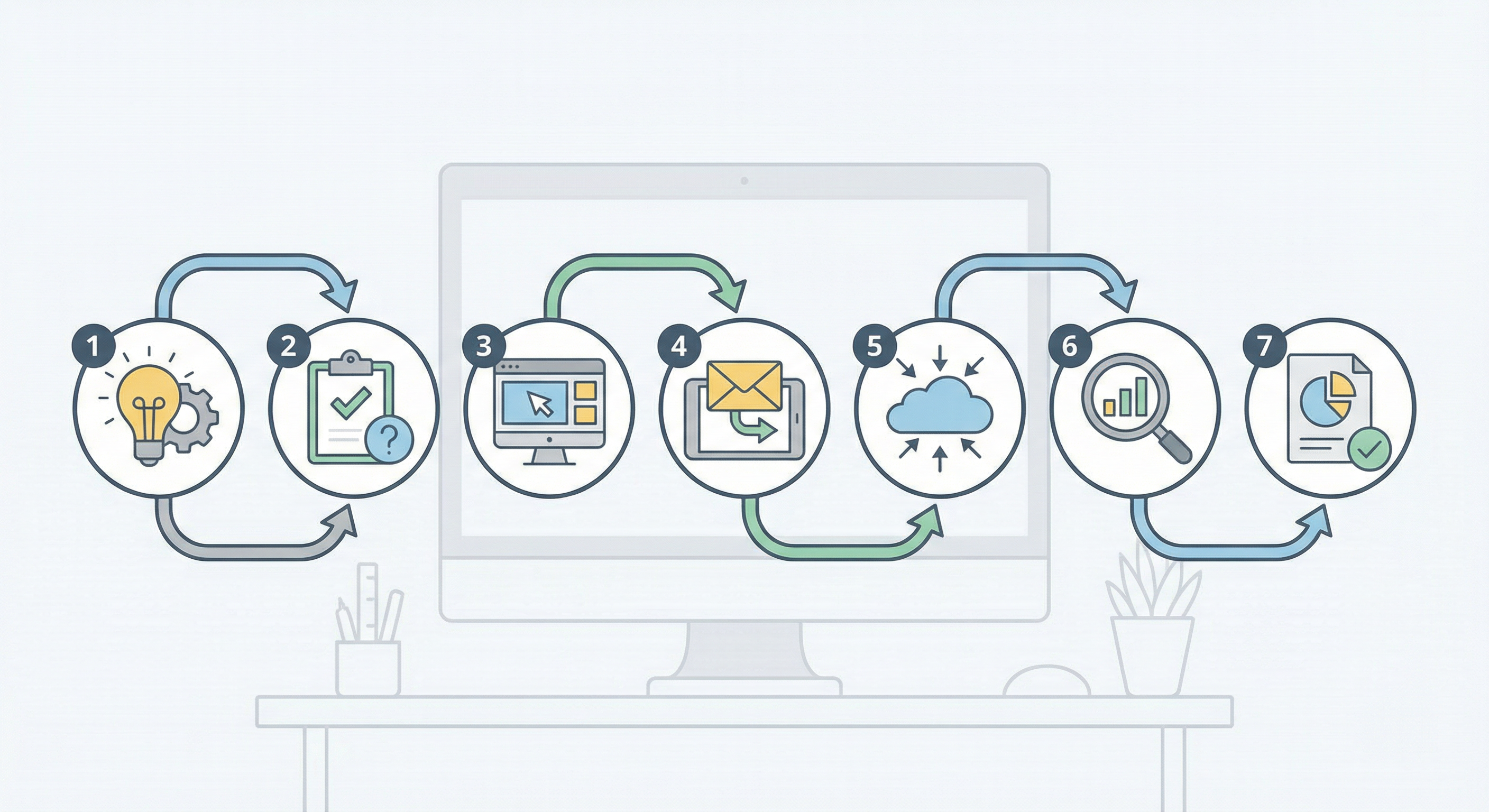

Online surveys are the bridge between your business and your audience’s needs, but only when they’re run well. Conducting a survey in 2026 means treating it like a conversation: clear goals, conversational questions, logic that respects the respondent’s time, and a plan to act on the data.

This guide walks you through 7 steps: (1) Set clear research goals. (2) Design conversational questions (mix types; aim for about six questions or fewer per respondent when possible, since longer surveys can tank completion). (3) Add conditional logic so respondents only see relevant questions. (4) Invite participants (email, in-app, link, QR). (5) Monitor response and drop-off; fix friction. (6) Analyze (quantitative + qualitative). (7) Close the loop: apply what you learned and tell respondents. You will also see strong vs weak execution, common pitfalls, a pre-launch checklist, and how to tie the process to high-impact survey design and form analytics.

For survey design depth, see high-impact surveys: 12 best practices and how to build surveys that get 80%+ response rates. For form analytics, see form analytics: what metrics actually matter. For the difference between surveys and questionnaires, see survey vs questionnaire: what is the difference. For AI-assisted survey design and analysis, see smarter surveys: modern guide to AI-powered surveys.

Why these 7 steps work

The sequence is deliberate. Setting the goal first prevents scope creep and keeps the survey short. Designing before adding logic ensures you have the right questions before you branch them. Inviting and monitoring tie the survey to the real world: channel choice and drop-off data determine who responds and where they leave. Analyzing and closing the loop turn data into decisions and loyalty. Skipping any step (e.g. launching without a goal, or collecting data without a debrief plan) weakens the whole process. Treat the 7 steps as a repeatable workflow: each survey improves when you iterate on the previous one using the same framework.

Rough timeline: Steps 1–3 (goal, design, logic) can take 30–90 minutes for a short survey; Step 4 (invite) depends on your channel and list; Step 5 (monitor) runs in parallel with the field period; Steps 6–7 (analyze, close the loop) should be scheduled before launch (e.g. “We will analyze within one week and email participants within two”). Defining the timeline upfront prevents the survey from stalling after data collection.

One-page brief before you start: write down

1. North Star goal

2. Target audience (who should take it)

3. Channel (email, in-app, link)

4. Field period (how long it stays open)

5. Who owns analysis and closing the loop

Share the brief with stakeholders so everyone is aligned before you draft questions.
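The brief and timeline above can be captured as a small data structure, so gaps and deadlines are visible before anyone drafts a question. This is a minimal Python sketch, not part of any tool: the `SurveyBrief` class and its field names are hypothetical, and it assumes the analysis and respondent-update deadlines are counted from the day the survey closes (one and two weeks by default).

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class SurveyBrief:
    """Hypothetical one-page brief: the items to agree on before drafting questions."""
    north_star: str   # the one decision the survey informs
    audience: str     # who should take it
    channel: str      # e.g. "email", "in-app", "link"
    launch: date      # first day of the field period
    field_days: int   # how long the survey stays open
    owner: str        # who owns analysis and closing the loop

    def missing_fields(self) -> list[str]:
        """Names of text fields left blank, so gaps surface before sign-off."""
        text_fields = {
            "north_star": self.north_star,
            "audience": self.audience,
            "channel": self.channel,
            "owner": self.owner,
        }
        return [name for name, value in text_fields.items() if not value.strip()]

    def schedule(self, analyze_within: int = 7, notify_within: int = 14) -> dict[str, date]:
        """Post-field deadlines, counted from the day the survey closes (an assumption)."""
        close = self.launch + timedelta(days=self.field_days)
        return {
            "close": close,
            "analysis_due": close + timedelta(days=analyze_within),
            "respondents_notified": close + timedelta(days=notify_within),
        }


brief = SurveyBrief(
    north_star="Decide whether to invest in feature X next quarter",
    audience="Customers active in the last 30 days",
    channel="email",
    launch=date(2026, 3, 2),
    field_days=10,
    owner="Product lead",
)
print(brief.missing_fields())  # an empty list means every item is filled in
print(brief.schedule())
```

Writing the deadlines down as dates, rather than "sometime after it closes", is what keeps Steps 6 and 7 from slipping.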

The 7 steps in brief

1. Set clear research goals — One “North Star” decision (e.g. reduce churn, validate feature). If a question doesn’t serve it, drop it.

2. Design conversational questions — Multiple choice, scales, ranking, sparing open-ended. Completion often drops sharply once a survey runs past about six questions, so keep each respondent's path short.

3. Personalize with logic — Conditional logic: if they haven’t used the mobile app, don’t show five mobile UI questions.

4. Invite survey participants — Email for deep feedback; QR or social link for reach.

5. Monitor and optimize — Watch drop-off by question; fix confusing or intrusive items. Response rate above 30% is often strong; conversational flows can push higher.

6. Analyze results — Quantitative (charts, trends) and qualitative (themes in open-ended).

7. Close the feedback loop — Fix what you learned, then tell users. That turns a survey into a loyalty tool.

The sections below unpack each step with implementation details, common pitfalls, and how to tie the process to high-impact survey design. For design principles in depth, see high-impact surveys: 12 best practices. For question types and wording, see the anatomy of a question.

Step 1: Set clear research goals

Every online survey should support one primary decision or objective. Before you write a single question, state it in one sentence: e.g. “We need this survey to decide whether to add feature X,” or “We need to understand why churn increased in Q2.” That becomes your North Star: any question that does not directly inform that decision is out of scope. Dropping “nice to have” questions keeps the survey short and completion high. Get stakeholder sign-off on the goal so that when someone asks to add extra items, you can refer back to the goal and say no. Example: If the North Star is “Decide whether to invest in feature X next quarter,” then every question should inform that—e.g. how often users hit the problem feature X solves, how they work around it today, and willingness to pay or prioritize. Questions about overall satisfaction or unrelated demographics stay out unless they are needed to segment the feature decision. For more on goal-driven design, see high-impact surveys: 12 best practices.
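One way to enforce the North Star rule is to require every draft question to name the part of the decision it informs, and to cut anything left blank. A minimal sketch, assuming a hypothetical `Question` record with an `informs` note. Deciding whether a question really serves the goal stays a human judgment; the code only makes unmapped questions impossible to miss.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Question:
    text: str
    informs: Optional[str] = None  # which part of the North Star decision this answers


def split_by_goal(questions: list[Question]) -> tuple[list[Question], list[Question]]:
    """Return (keep, cut): questions mapped to the goal vs. those with no mapping."""
    keep = [q for q in questions if q.informs and q.informs.strip()]
    cut = [q for q in questions if not (q.informs and q.informs.strip())]
    return keep, cut


# North Star: "Decide whether to invest in feature X next quarter"
draft = [
    Question("How often do you run into the problem feature X solves?", informs="frequency of the problem"),
    Question("How do you work around it today?", informs="cost of the workaround"),
    Question("How would you rank feature X against our other planned features?", informs="priority"),
    Question("Overall, how satisfied are you with our product?"),  # no mapping: out of scope
]
keep, cut = split_by_goal(draft)
for q in cut:
    print("Cut:", q.text)
```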

Step 2: Design conversational questions

Conversational means clear, simple language and a mix of question types that feel like a dialogue, not a form. Use multiple choice, single choice, scales (e.g. 1–5 or NPS), and ranking where order matters; add open-ended questions sparingly (one or two) where you need qualitative depth. Completion commonly falls off once a respondent's path runs past about six questions, so aim for a short path and use conditional logic (Step 3) so each respondent sees only relevant items. Your full question set can be larger than any one path: as a rule of thumb, 5–10 closed-ended questions plus 0–1 open-ended often fits in under two minutes, and branching lets you cover more topics while showing each person fewer questions. Avoid jargon, double-barreled questions (keep one idea per question), and leading wording. Example: Instead of “Please rate the following aspects of our solution: ease of use, value for money, and customer support” (three ideas in one), ask three separate questions or use a short grid with one idea per row. For product feedback in particular, see 10 essential product survey questions for better feedback. For question types and best practices, see the anatomy of a question and demographic survey questions: guide, examples, and best practices. For qualitative vs quantitative balance, see the research compass: qualitative vs quantitative data.
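Some of the wording problems described here (double-barreled questions, leading wording, jargon) can be flagged automatically before a human review. The sketch below is a deliberately crude linter: the phrase and jargon lists are hypothetical placeholders, and matching on "and"/"or" or on comma-separated lists will produce false positives, so treat its output as a prompt to reread a question, not a verdict.

```python
import re

# Hypothetical word lists: replace them with phrases that matter for your audience.
LEADING_PHRASES = ("don't you agree", "wouldn't you say", "how much do you love")
JARGON = ("synergy", "omnichannel", "leverage")


def lint_question(text: str) -> list[str]:
    """Return warnings for one draft question. Crude heuristics, not a grammar check."""
    lowered = text.lower()
    warnings = []
    # "and"/"or", or a list with two or more commas, can signal two ideas in one question.
    if re.search(r"\b(and|or)\b", lowered) or lowered.count(",") >= 2:
        warnings.append("possibly double-barreled: keep one idea per question")
    if any(phrase in lowered for phrase in LEADING_PHRASES):
        warnings.append("possibly leading wording")
    found = [term for term in JARGON if re.search(rf"\b{re.escape(term)}\b", lowered)]
    if found:
        warnings.append("jargon: " + ", ".join(found))
    return warnings


drafts = [
    "Please rate the following aspects of our solution: ease of use, value for money, and customer support",
    "How easy was it to set up your account?",
    "Don't you agree our synergy features save time?",
]
for d in drafts:
    print(d, "->", lint_question(d) or "ok")
```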

Step 3: Add conditional logic

Conditional logic (branching or skip logic) shows follow-up questions only when they are relevant. For example: if the respondent has not used the mobile app, skip the five mobile UI questions; if they chose “Other,” show a single open-ended box. That shortens the path, reduces fatigue, and improves completion. Use logic for screeners (qualify who should take the survey), for follow-ups to specific choices, and for sections that apply only to a subset. Example branching: “Have you used our mobile app in the last 30 days?” → If No, skip the next five questions about mobile UI and go to “What would make you try the mobile app?” → If Yes, show the five mobile UI questions, then continue. That way each respondent sees only what applies to them. Form builders like AntForms support conditional logic without coding. For examples of logic in lead qualification and forms, see conditional logic examples for lead qualification.
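Branching like the mobile-app example can be modeled as a display condition on each question. This sketch assumes a hypothetical `Q` type whose `show_if` predicate receives the answers given so far; form builders such as AntForms configure the same idea through their own interfaces, and this is not their API. The question IDs and the `next_section` item are placeholders.

```python
from dataclasses import dataclass
from typing import Callable

Answers = dict[str, str]


@dataclass
class Q:
    qid: str
    text: str
    # Display condition: show this question only if it returns True for the answers so far.
    show_if: Callable[[Answers], bool] = lambda answers: True


def respondent_path(questions: list[Q], answers: Answers) -> list[str]:
    """IDs of the questions this respondent would actually see, in order."""
    return [q.qid for q in questions if q.show_if(answers)]


def used_app(answers: Answers) -> bool:
    return answers.get("used_app") == "Yes"


survey = [
    Q("used_app", "Have you used our mobile app in the last 30 days?"),
    *[Q(f"mobile_ui_{i}", f"Mobile UI question {i}", show_if=used_app) for i in range(1, 6)],
    Q("try_app", "What would make you try the mobile app?",
      show_if=lambda a: a.get("used_app") == "No"),
    Q("next_section", "First question of the next section (everyone sees it)"),
]

print(respondent_path(survey, {"used_app": "No"}))   # 3 questions
print(respondent_path(survey, {"used_app": "Yes"}))  # 7 questions
```

Expressing logic as "show if" conditions, rather than "jump to" instructions, keeps each rule next to the question it governs, which makes the survey easier to edit without breaking a branch.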

Step 4: Invite participants

Choose the channel that matches your goal and audience. Email works well for deep feedback from known users (e.g. post-purchase, post-support). In-app or in-product messages reach active users at the right moment. Link or QR on receipts, packaging, or at events can boost reach. In the invite, state how long the survey takes and how you will use the data; that builds trust and sets expectations. Avoid sending the same survey too often to the same people; space out requests to protect response rates. When to use which channel:

| Channel | Best for | Survey length | Notes |
|---|---|---|---|
| Email | Known contacts, post-purchase, support, beta | Short to medium (e.g. 2–5 min) | Include time estimate and how you will use data |
| In-app / in-product | Active users at a relevant moment | Short (e.g. 1–2 min) | Trigger after key action; avoid interrupting too often |
| Link / QR / social | Events, packaging, reach | Very short (e.g. under 1 min) | Noisier; keep to few questions and clear CTA |

Match the channel to survey length: short pulse on a noisy channel, longer survey only when you have attention (e.g. email with a clear time estimate). For tactics to maximize response, see how to build surveys that get 80%+ response rates.
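To space out requests, you can filter your contact list by when each person was last surveyed before sending an invite. A minimal sketch, assuming you have a last-surveyed date per contact (or none) and a 90-day cooldown; the 90 days is an illustrative value, not a recommendation from this guide.

```python
from datetime import date, timedelta
from typing import Optional


def eligible_contacts(last_surveyed: dict[str, Optional[date]], today: date,
                      cooldown_days: int = 90) -> list[str]:
    """Contacts not surveyed within the cooldown window; never-surveyed contacts qualify."""
    cutoff = today - timedelta(days=cooldown_days)
    return sorted(
        contact
        for contact, last in last_surveyed.items()
        if last is None or last <= cutoff
    )


contacts = {
    "ana@example.com": date(2026, 1, 10),  # surveyed recently: skip this wave
    "ben@example.com": None,               # never surveyed
    "cy@example.com": date(2025, 9, 1),    # outside the 90-day window
}
print(eligible_contacts(contacts, today=date(2026, 2, 1)))
```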

Step 5: Monitor and optimize

Watch completion rate and drop-off by question. If many people abandon at a specific question, it may be confusing, too sensitive, or too long. Use form analytics (e.g. form analytics: what metrics actually matter) to see where the friction is. Fix obvious issues mid-campaign if possible (e.g. clarify wording), and note which responses came in before the change, since they may not be directly comparable; for the next wave, shorten or reorder based on drop-off data.

When drop-off is high at one question, consider whether it is too long (e.g. a big grid), too sensitive (e.g. income or personal data), or poorly worded (e.g. double-barreled or jargon). Move sensitive items to the end, split long grids, or simplify the question. If drop-off is high at the start, the invite or first question may not set expectations well; add a time estimate or a warmer opener. Iteration turns a one-off survey into a learning system.

Response rate benchmarks: For voluntary, non-incentivized surveys, 20–30% is common, and 30%+ is often considered strong. Incentives, a very short survey, or a highly engaged audience (e.g. power users) can push it to 40% or higher. Do not fixate on a single number; establish your own baseline and improve on it over time by applying the 7 steps (especially a short path, the right channel, and closing the loop).
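Drop-off by question is straightforward to compute if you know how far each respondent got. A minimal sketch for a linear survey (no branching), assuming each respondent is recorded as the number of questions they answered before leaving; with conditional logic, compute each rate only over respondents whose path includes that question. The sample data is made up for illustration.

```python
from collections import Counter


def dropoff_by_question(last_answered: list[int], n_questions: int) -> list[float]:
    """Share of respondents who reached each question but left without answering it.

    last_answered[r] = how many questions respondent r answered before leaving
    (0 = opened the survey and answered nothing, n_questions = completed).
    Assumes a linear survey where everyone sees the same questions in order.
    """
    stopped_after = Counter(last_answered)
    reached = len(last_answered)  # everyone who started reached question 1
    rates = []
    for k in range(n_questions):
        left_here = stopped_after[k]  # saw question k+1 but did not answer it
        rates.append(left_here / reached if reached else 0.0)
        reached -= left_here
    return rates


def completion_rate(last_answered: list[int], n_questions: int) -> float:
    return last_answered.count(n_questions) / len(last_answered) if last_answered else 0.0


progress = [3, 3, 3, 1, 1, 0, 3, 2, 3, 3]  # ten starts on a three-question survey
rates = dropoff_by_question(progress, n_questions=3)
worst = max(range(len(rates)), key=rates.__getitem__) + 1
print(f"completion {completion_rate(progress, 3):.0%}; worst drop-off at question {worst}")
# prints: completion 60%; worst drop-off at question 2
```

Dividing by the number who reached each question, rather than by everyone who started, keeps late questions from looking artificially safe.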

Step 6: Analyze results

Analyze quantitative data first: counts, percentages, trends by segment (e.g. by role, by usage). Use charts and simple stats to see what the majority says and where segments differ. Then mine qualitative data: themes in open-ended responses, recurring phrases, and sentiment. Triangulate: do the numbers and the quotes tell the same story? Summarize in a short report or deck: key findings, 2–3 recommendations, and suggested owners. If you have open-ended responses, tag or code themes (e.g. “pricing,” “ease of use,” “support”) and count how often each appears; that quantifies qualitative data and makes it easier to present. If you use AI to summarize open-ended responses, still spot-check for accuracy and bias; human review keeps the analysis trustworthy. For customer feedback question ideas, see mastering feedback: 43 survey questions to improve customer loyalty.
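Coding open-ended responses can start as simple keyword tagging before a human refines the themes. In this sketch the `CODEBOOK` themes and keywords are hypothetical, and plain substring matching is crude (it misses synonyms and can match inside longer words), so spot-check the tags the same way you would an AI summary.

```python
from collections import Counter

# Hypothetical codebook (theme -> keywords); refine it after reading a sample of responses.
CODEBOOK = {
    "pricing": ("price", "expensive", "cost"),
    "ease of use": ("easy", "confusing", "intuitive", "hard to"),
    "support": ("support", "help desk", "response time"),
}


def tag_themes(response: str) -> set[str]:
    """Themes whose keywords appear in a response (case-insensitive substring match)."""
    text = response.lower()
    return {theme for theme, words in CODEBOOK.items() if any(w in text for w in words)}


def theme_shares(responses: list[str]) -> dict[str, float]:
    """Share of responses mentioning each theme, most frequent first."""
    counts = Counter()
    for response in responses:
        counts.update(tag_themes(response))
    return {theme: n / len(responses) for theme, n in counts.most_common()}


responses = [
    "Too expensive for a small team",
    "Setup was confusing and support took days to reply",
    "Easy to use, but the price went up",
    "Love it",
]
for theme, share in theme_shares(responses).items():
    print(f"{theme}: {share:.0%}")
```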

Step 7: Close the feedback loop

Closing the loop means (1) acting on the findings (making decisions, assigning owners, changing product or process) and (2) telling respondents what you learned and what you will do next. A short email or in-app message (e.g. “Here are the top 3 themes from your feedback; we are prioritizing X and will update you by…”) turns the survey into a loyalty tool. Plan the debrief before you launch: who analyzes, when, what decisions, and how you will communicate.

Why closing the loop matters: Respondents who never hear back assume their input was ignored. That erodes trust, teaches people that surveys do not matter, and lowers response rates on the next survey. Even a brief “Here is what we heard and what we are doing” can improve retention and future response rates.

Closing-the-loop template:

1. Thank respondents.

2. Share 2–3 headline findings in plain language.

3. State 1–2 actions you will take and by when.

4. Invite further feedback or point to a place where they can stay updated.

Keep it to a short email or in-app message; long reports are for internal use. For more on acting on feedback, see high-impact surveys: 12 best practices.
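The four-part template lends itself to a small message builder that also enforces the brevity limits (2–3 findings, 1–2 actions). The function name, wording, and placeholder URL below are assumptions for illustration; adapt the copy to your brand voice and channel.

```python
def close_loop_message(findings: list[str], actions: list[tuple[str, str]],
                       updates_url: str) -> str:
    """Build a short respondent update: thanks, 2-3 findings, 1-2 dated actions, follow-up."""
    if not 2 <= len(findings) <= 3:
        raise ValueError("share 2-3 headline findings")
    if not 1 <= len(actions) <= 2:
        raise ValueError("commit to 1-2 actions")
    lines = ["Thank you for taking our survey.", "", "Here is what we heard:"]
    lines += [f"- {finding}" for finding in findings]
    lines += ["", "What we are doing next:"]
    lines += [f"- {action} (by {deadline})" for action, deadline in actions]
    lines += ["", f"Have more to add? Reply to this message or follow our progress at {updates_url}."]
    return "\n".join(lines)


print(close_loop_message(
    findings=["Setup takes longer than expected", "Most teams find pricing clear"],
    actions=[("Simplify the setup wizard", "June 30")],
    updates_url="https://example.com/updates",  # placeholder URL
))
```

Raising an error on too many findings is a deliberate design choice: it forces the long version into the internal report, where it belongs.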

Strong vs weak execution

| Step | Weak execution | Strong execution |
|---|---|---|
| 1. Goals | Vague or multiple goals; many off-topic questions | One North Star decision; every question maps to it |
| 2. Questions | Long, jargon-heavy, all one type | Conversational, mix of types, under ~6–10 per path |
| 3. Logic | Linear only; everyone sees every question | Conditional logic; only relevant questions shown |
| 4. Invite | Generic link, no context or time estimate | Right channel; clear time and use of data |
| 5. Monitor | No tracking of drop-off or completion | Form analytics; fix friction and iterate |
| 6. Analyze | Raw data only; no summary or recommendations | Quantitative + qualitative; summary and owners |
| 7. Close loop | No follow-up to respondents or stakeholders | Act on findings; tell respondents what changed |

Tools and form builders

Running the 7 steps is easier with a form builder that supports conditional logic, multiple question types, and analytics. Look for: branching/skip logic (Step 3), unlimited or high response caps so you are not forced to cut off responses mid-campaign, completion and drop-off tracking (Step 5), and export or integration for analysis (Step 6). AntForms supports conditional logic, unlimited responses, and analytics, and fits the conversational survey workflow without coding. Choose a builder that lets you iterate quickly (e.g. edit logic and questions without rebuilding the whole survey). For more on what to measure, see form analytics: what metrics actually matter.

When to run a survey (vs other methods): Surveys are ideal when you need structured feedback from a defined audience at a point in time, e.g. post-purchase, post-support, or a quarterly pulse. For ongoing, unstructured feedback, consider support tickets, reviews, or community channels; for deep qualitative insight, consider interviews. The 7 steps still apply when surveys are part of a mixed-methods approach.

Common pitfalls

- Skipping the goal: Launching without a single clear decision leads to long, unfocused surveys and low completion. Write the North Star first.

- Too many questions: Pushing past 6–10 questions (or the equivalent with logic) often tanks completion. Cut to what serves the goal.

- No conditional logic: Showing everyone every question wastes time and causes drop-off. Use branching for screeners and follow-ups.

- Wrong channel: Sending a long survey via a noisy channel (e.g. cold social) or a short pulse via email can misfire. Match channel to length and audience.

- Ignoring drop-off: Not checking where people leave means you repeat the same friction. Use analytics and fix the worst drop-off points.

- No analysis or ownership: Collecting data without a summary or assigned owners means nothing changes. Plan the debrief before launch.

- Not closing the loop: Failing to tell respondents what you learned and what you will do hurts trust and future response rates.

Pre-launch checklist

- One North Star goal written; every question mapped to it

- Conversational questions; mix of types; path under ~6–10 questions (or use logic to shorten)

- Conditional logic added for screeners and relevant follow-ups

- Invite channel chosen; message includes time estimate and how data will be used

- Form analytics or tracking in place to monitor completion and drop-off

- Debrief planned: who analyzes, when, what decisions, how you will tell respondents

Implementation: from goal to launch

A practical sequence to go from zero to a live survey:

- Write the North Star goal (one sentence) and get sign-off.

- List the decisions the survey will inform; drop any question that does not map.

- Draft questions (conversational, mix types, one idea per question).

- Add conditional logic (screeners, follow-ups, skip irrelevant sections).

- Estimate length (e.g. 2–3 s per closed question, 15–30 s per open-ended); cut if over ~2 minutes.

- Choose channel and draft invite (time estimate, how data will be used).

- Set up analytics (completion, drop-off by question).

- Plan debrief (who, when, what decisions, how you will tell respondents).

- Launch and monitor; fix friction where possible; after close, analyze and close the loop.

Run a quick friends-and-family test before launch: have 2–3 people take the survey and note where they hesitate or misinterpret. Fix those spots; it takes minutes and often catches wording or logic issues that analytics would not explain. Using a form builder with conditional logic and analytics (e.g. AntForms) streamlines the middle of this sequence, from drafting questions through setting up analytics. For a design-focused checklist, see high-impact surveys: 12 best practices.

Data quality and respondent trust

High-quality results depend on respondent trust and clear expectations. In the invite and at the start of the survey, state how long it takes, how you will use the data, and who will see it (e.g. “Results are anonymized and used only to improve our product”). Avoid leading questions and loaded wording; one idea per question reduces ambiguity. If you collect personal or sensitive data, explain why and how it is protected. Closing the loop (Step 7) reinforces that the survey mattered and encourages honest participation in the future. Keep response options balanced (e.g. full range from negative to positive) so you do not steer answers. For demographic and sensitive-question guidance, see demographic survey questions: guide, examples, and best practices.

Integrating surveys with other feedback

The 7 steps apply whether the survey stands alone or fits into a larger feedback system. You might run a pulse survey (short, frequent) alongside annual or quarterly deep dives; use the same North Star and question set for consistency. Surveys can complement support tickets, NPS or CSAT programs, and product analytics: survey data explains the “why” behind behavior. When you analyze (Step 6), triangulate with other sources (e.g. “Survey says X; support tickets show the same theme”). For customer loyalty question sets, see mastering feedback: 43 survey questions to improve customer loyalty.

Frequently asked questions

What are the steps to conduct an online survey?

Set clear research goals, design conversational questions (mix types; keep short), add conditional logic, invite participants via the right channel, monitor response and drop-off, analyze results (quantitative and qualitative), and close the feedback loop by acting on findings and telling respondents.

How many questions should an online survey have?

Aim for about six questions or fewer on each respondent's path, and rarely more than ten; completion often drops sharply once a survey runs past about six questions. Use conditional logic so each respondent sees only relevant questions, keeping their path short.

What is a good response rate for an online survey?

A response rate above 30% is often strong for voluntary surveys. Conversational design, the right channel, and a clear promise (e.g. time estimate, how results will be used) can push both response and completion rates higher.

How do I close the feedback loop after a survey?

Act on the findings (make decisions, assign owners), then tell respondents what you learned and what you will do next. A short email or in-app message reinforces that the survey mattered and builds loyalty.

What is conditional logic in surveys?

Conditional logic (branching or skip logic) shows follow-up questions only when relevant (e.g. if they have not used the mobile app, skip mobile UI questions). It shortens the path and improves completion. Use it for screeners and for follow-up questions that only apply to certain answers.

Summary and next steps

Summary: Conducting an online survey in 2026 boils down to 7 steps: set a clear research goal, design conversational questions (short, mixed types), add conditional logic, invite via the right channel, monitor completion and drop-off, analyze quantitative and qualitative results, and close the loop by acting and telling respondents. Strong execution at each step—especially goal clarity, short path, and closing the loop—turns surveys into a repeatable source of actionable feedback. Use the strong vs weak execution table to self-check, and the pre-launch checklist before every launch.

Next steps: Write your North Star goal and draft questions that map to it. Add conditional logic and choose your invite channel. Run a quick test with 2–3 people before sending to the full list. Before launch, set up analytics and plan the debrief. After launch, fix friction where drop-off is high and close the loop so the next survey gets an even better response. Use a form builder like AntForms for conditional logic and unlimited responses. Revisit high-impact surveys: 12 best practices for design depth and how to build surveys that get 80%+ response rates for response tactics. Treat the 7 steps as a cycle: each survey you run gives you data to improve the next one (better questions, a better channel, and a better way of closing the loop).

Key takeaway: To conduct an online survey in 2026, set the goal first, then design conversationally, add conditional logic, choose the right channel, monitor, analyze, and finally act and close the loop.

Try AntForms to build and run online surveys with conditional logic and unlimited responses. For more, read high-impact surveys: 12 best practices, how to build surveys that get 80%+ response rates, and form analytics: what metrics actually matter.